Inside Hybrid Search: What Actually Happens in a Fashion Catalog

Most hybrid search systems stop at two layers. This fashion search engine uses three to match every shopper query and free up your team.

by YesPlz.AI, May 2026

A shopper visits your site and types ‘flowy summer dress’ into your search bar. It takes less than a second. But inside your search engine, a lot has to go right for that query to return something she actually wants to buy.

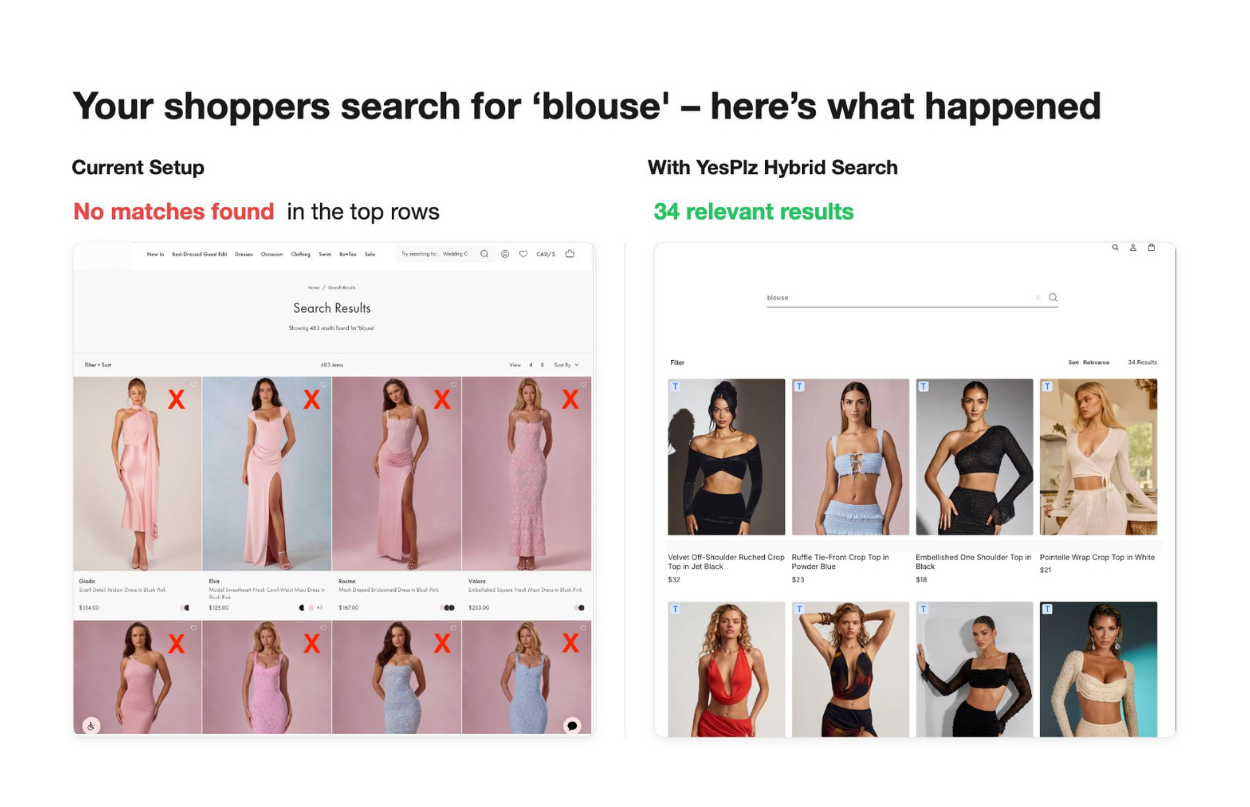

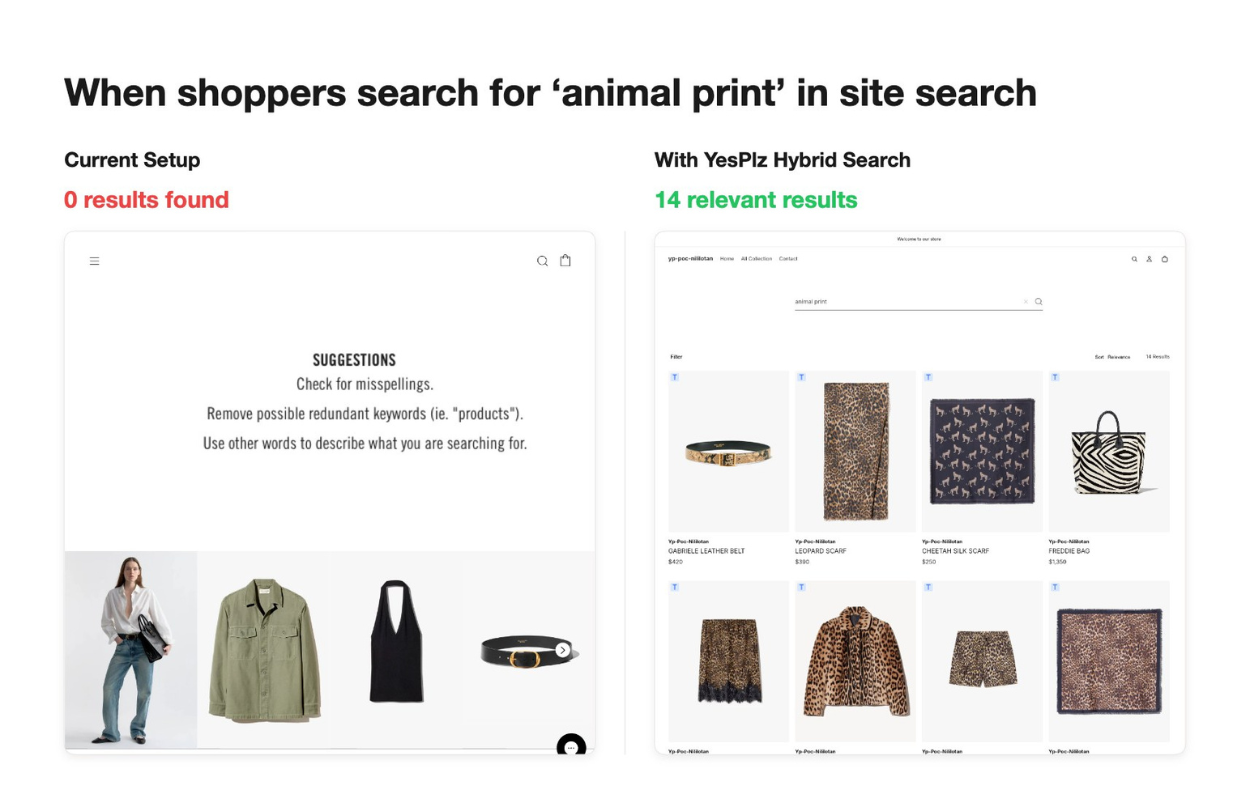

For most keyword search engines, words like ‘flowy’ are a problem. ‘Flowy’ is not a size, a color, or a brand name. It's a feeling, and feelings are exactly what traditional keyword search was never built to handle. A word like this probably doesn’t appear in any of your product titles or descriptions, and it isn't a tag your merchandising team entered into your product catalog.

This is where most fashion eCommerce teams struggle. You pull the zero-result reports every Monday morning. You chase your team to retag products. And you try to predict what shoppers will search for next, then update titles, descriptions, and tags accordingly. It's a manual process with no finish line. Hybrid search was built to close that gap.

This article breaks down exactly what happens inside your search bar when a shopper types a query. You'll also learn about the three layers that make the YesPlz AI fashion search engine work, how they interact in real time, and what that means for your team's day-to-day workload.

In our article on what hybrid search is, you learned that at its most basic level, hybrid search combines two retrieval methods: keyword search and semantic search. Many vendors claim to offer this AI-powered search technology. And technically, most of them do.

But the semantic half of most hybrid search systems runs on a general-purpose AI model. It is trained on broad internet data, not on fashion catalogs. It's the same kind of model used to search furniture catalogs, B2B software documentation, electronics reviews, and more. It knows language, but it doesn't know fashion.

This matters more in fashion than in almost any other category. Trend-driven queries, vague descriptive language, and typos make up a significant share of your total search volume. A general-purpose model wasn’t built to handle any of them well.

Closing that gap is what a fashion search engine is designed to do. It takes three layers to get there.

YesPlz AI Fashion Search Engine: The Three Layers of Hybrid Search

The YesPlz AI hybrid search engine doesn’t run on two layers; it runs on three. Each one covers what the others can’t. In this section, you’ll explore what each layer does, where it wins, and where it would fall short without the others.

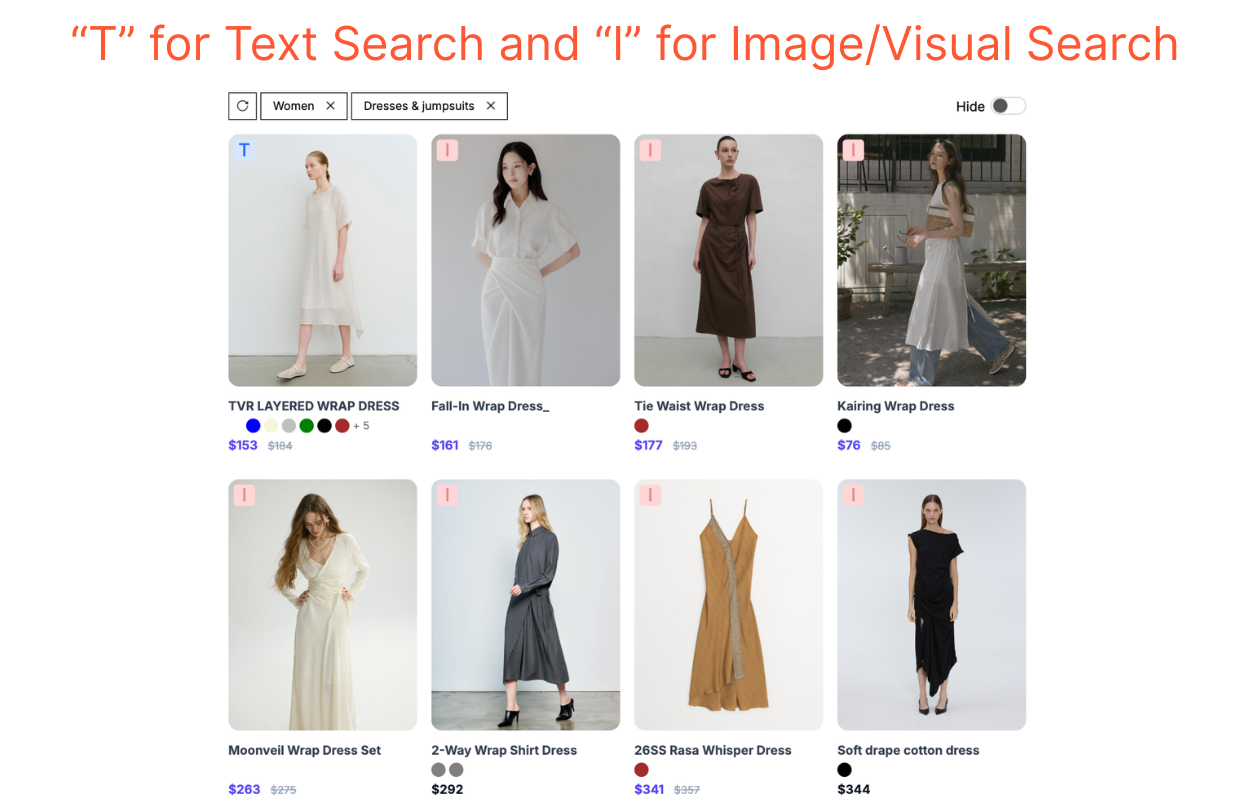

Text search is the foundation. When a shopper types a query, the text layer scans your product titles, descriptions, tags, and metadata for exact matches. It's fast, reliable, and essential for queries about brand names, SKUs, categories, or specific attributes like colors and sizes. For example, the text layer can handle ‘black hoodie’ or ‘wide leg pants’ with precision.

But precision is also its ceiling. The moment a query steps outside your tag library, the text layer runs out of road. Take ‘flowy,’ ‘old money,’ ‘Y2K,’ or ‘90s fashion’: if those words don't appear somewhere in your catalog data, the text layer returns nothing. And your catalog can't possibly keep up with every new word your shoppers invent.

What this means for fashion retailers: The text layer is what your team has been maintaining manually. You frequently pull zero-result reports, add synonyms, and update product titles and descriptions. A well-built text layer with automatic typo correction and synonym management reduces that burden significantly. But it still can't work alone.
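To make the text layer concrete, here's a minimal Python sketch of keyword matching with the two maintenance aids mentioned above: typo correction and a synonym map. It assumes a tiny in-memory catalog; the product ids, the `SYNONYMS` dictionary, and the 0.8 similarity cutoff are hypothetical illustrations, not how YesPlz implements its text layer. Typo correction uses `difflib.get_close_matches` from Python's standard library.

```python
from difflib import get_close_matches

# Hypothetical catalog: product id -> searchable text (title, description, tags).
CATALOG = {
    "p1": "black cotton hoodie",
    "p2": "wide leg linen pants",
    "p3": "mini denim skirt",
}

# Hypothetical synonym map, the kind a merchandising team maintains by hand.
SYNONYMS = {"short": ["mini"], "trousers": ["pants"]}

# Every known word (catalog terms plus synonym keys), used for typo correction.
VOCAB = sorted({w for text in CATALOG.values() for w in text.split()} | set(SYNONYMS))


def normalize(term: str) -> str:
    """Replace a likely typo with the closest known word, if one is close enough."""
    match = get_close_matches(term, VOCAB, n=1, cutoff=0.8)
    return match[0] if match else term


def matches(text: str, query: str) -> bool:
    """True if every query term (after typo correction), or one of its synonyms, is in the text."""
    words = set(text.split())
    for raw in query.lower().split():
        term = normalize(raw)
        candidates = {term, *SYNONYMS.get(term, [])}
        if candidates.isdisjoint(words):
            return False
    return True


def text_search(query: str) -> list[str]:
    """Return ids of products that match every term in the query."""
    return [pid for pid, text in CATALOG.items() if matches(text, query)]


print(text_search("blak hoodie"))  # ['p1']  (typo corrected to "black")
print(text_search("short skirt"))  # ['p3']  (synonym: short -> mini)
print(text_search("flowy dress"))  # []      (word appears nowhere in the catalog)
```

The last query shows the ceiling described above: no typo rule or synonym entry can rescue ‘flowy’ when the word appears nowhere in your catalog data.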

This is where the fashion search engine starts to separate itself from a generic hybrid search solution. Instead of relying solely on what was entered into your product data fields, the image tagging layer reads your product images directly.

It scans each image and extracts fashion-specific attributes: silhouette, neckline, sleeve length, fabric texture, pattern, occasion signals, aesthetic vibe, etc. This matters because most fashion catalogs are under-tagged.

Let’s say you upload a product titled ‘women’s top.’ To the image tagging layer, it’s a flowy, V-neck, floral-print blouse with a boho silhouette. To the text layer, however, those attributes are invisible, because it can only read the words in your product data.

What this means for fashion retailers: Instead of manually tagging new SKUs before they go live, you can let this layer tag product images automatically at upload. Your products are searchable from day one, you become less dependent on supplier data quality, and your site search keeps working even when your catalog grows faster than your team can keep up.
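Here's a minimal sketch of why image tagging helps, using the ‘women’s top’ example. The `tag_image` function is a hypothetical stub standing in for a real vision model; it simply returns the attributes from the example. The point is the data flow: tags are extracted once at upload and merged into the terms the text layer can match.

```python
from dataclasses import dataclass, field


@dataclass
class Product:
    pid: str
    title: str
    # Attributes extracted from the product image at upload time.
    image_tags: list[str] = field(default_factory=list)


def tag_image(image_path: str) -> list[str]:
    """Hypothetical stand-in for an image tagging model.

    A real system would run a fashion vision model on the image; this stub
    ignores the path and returns fixed attributes purely for illustration.
    """
    return ["flowy", "v-neck", "floral-print", "boho", "blouse"]


def searchable_terms(product: Product, use_image_tags: bool) -> set[str]:
    """Collect the terms the text layer can match against."""
    terms = set(product.title.lower().split())
    if use_image_tags:
        terms |= set(product.image_tags)
    return terms


top = Product(pid="p1", title="Women's Top")
top.image_tags = tag_image("womens-top.jpg")  # enrich once, at upload

print("flowy" in searchable_terms(top, use_image_tags=False))  # False
print("flowy" in searchable_terms(top, use_image_tags=True))   # True
```

Without the image tags, a search for ‘flowy’ can't reach this product; with them, it can, even though nobody edited the title.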

When the first two layers, text and image tagging, both miss, the third layer picks up the slack.

Consider a ‘short skirt’ query. The text layer scans your product titles, descriptions, and tags for the exact word ‘short.’ But your product data might say ‘mini’ instead, and your tags may not include a length attribute at all.

That’s where the third layer, the fashion embedding layer, steps in. It looks directly at your product images, recognizes the hemline, and surfaces the right options. No exact keyword is required. This is possible because of how the fashion embedding model is built.

Generic embedding models are trained on broad data. They can recognize a fashion item in an image as a skirt. But they can't tell a pencil silhouette from an A-line, or pick up on drape, hemline, or fabric weight. Those distinctions simply weren't in their training data.

Fashion embeddings — like the one we use at YesPlz AI — are trained specifically on fashion data, both images and text. The model learns what fashion shoppers actually care about: cut, fit, length, fabric, style, and so on.
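Under the hood, embedding search is usually nearest-neighbor retrieval: a model turns the query and each product into vectors, and products whose vectors point in a similar direction (high cosine similarity) rank first. The sketch below shows only that ranking step. The three-dimensional vectors are made up for illustration; a real fashion embedding model would compute them from images and text, and this is not YesPlz's model.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors: 1.0 means they point the same way."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Toy 3-dimensional vectors, hand-written for illustration. A trained fashion
# embedding model would produce these from images and text, with far more dimensions.
PRODUCT_VECTORS = {
    "mini denim skirt": [0.9, 0.1, 0.2],
    "pencil midi skirt": [0.3, 0.8, 0.1],
    "maxi a-line skirt": [0.05, 0.2, 0.9],
}
query_vector = [0.85, 0.15, 0.1]  # hypothetical embedding of the query "short skirt"

ranked = sorted(
    PRODUCT_VECTORS,
    key=lambda name: cosine_similarity(query_vector, PRODUCT_VECTORS[name]),
    reverse=True,
)
print(ranked)  # ['mini denim skirt', 'pencil midi skirt', 'maxi a-line skirt']
```

The mini skirt ranks first even though its text never contains the word ‘short,’ because the vectors, not the keywords, carry the match.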

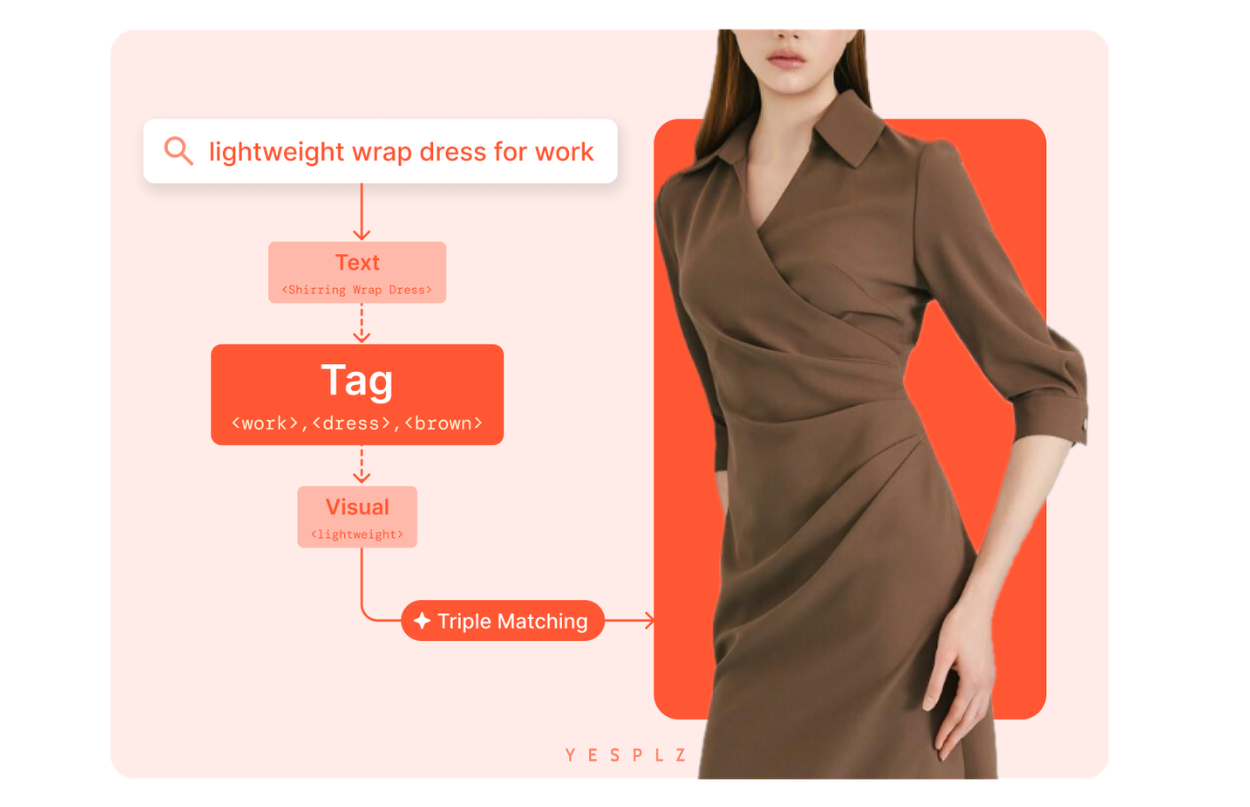

Understanding each layer individually is one thing. Seeing how they interact in real time is another. Let's walk through a single query, ‘lightweight wrap dress for work,’ to see how the three layers work together.

Layer 1: Text Search

The text layer focuses on what it can match correctly. It scans your catalog to find products with the words ‘wrap dress.’ This process is fast and reliable. But the words ‘lightweight’ and ‘for work’ stop it in its tracks. These words almost certainly don’t appear in any product titles, descriptions, or tags. So the text layer passes them on.

Layer 2: Image Tagging

The image tagging layer picks up where the text layer left off. It tags all images in your catalog with their specific attributes. Even if ‘for work’ and ‘lightweight’ don't appear anywhere in your product data, the tagging layer recognizes them from the images:

The collar, the structured silhouette, and the polished finish signal ‘for work.’

The fluid drape and the way the fabric falls signal ‘lightweight.’

Layer 3: Fashion Embedding

The fashion embedding layer handles what neither text nor tags could fully capture on their own. It maps the full query to products that match not just individual attributes, but the overall intent behind the search.

The results from all three layers (text, image tags, and fashion embeddings) are combined into a single ranked list. Products that score well across multiple layers rise to the top. What the shopper sees are dresses that match what she imagined when she searched for a lightweight wrap dress for work.
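The article doesn't specify how YesPlz merges the three layers' results. One widely used method for this kind of merge is reciprocal rank fusion (RRF), shown here as a stand-in: each layer contributes `1 / (k + rank)` for every product it returns, so products found by several layers accumulate the highest scores. The per-layer result lists are hypothetical, and `k = 60` is a commonly used default.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Merge ranked lists of product ids into one ranking.

    Each list adds 1 / (k + rank) to every product it contains, so products
    that several layers rank highly accumulate the largest scores.
    """
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, pid in enumerate(ranking, start=1):
            scores[pid] += 1.0 / (k + rank)
    return sorted(scores, key=lambda pid: scores[pid], reverse=True)


# Hypothetical per-layer results for "lightweight wrap dress for work".
text_hits = ["wrap-dress-A", "wrap-dress-B", "wrap-dress-C"]         # matched "wrap dress"
tag_hits = ["wrap-dress-B", "shirt-dress-D", "wrap-dress-A"]         # tagged lightweight, work-ready
embedding_hits = ["wrap-dress-B", "wrap-dress-A", "sheath-dress-E"]  # closest to the full query

fused = reciprocal_rank_fusion([text_hits, tag_hits, embedding_hits])
print(fused[:3])  # ['wrap-dress-B', 'wrap-dress-A', 'shirt-dress-D']
```

Dresses B and A appear in all three lists, so they outrank items that only one layer found.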

Now, let’s consider what breaks when any single layer is missing:

No text layer: ‘Wrap dress’ has no anchor. The results drift too far from the product type she was looking for. (When it comes to matching the exact words, nothing is better than text search.)

No image tagging: ‘For work’ and ‘lightweight’ — two of the most descriptive words in the query — have no match anywhere in your catalog data. (Image tagging helps enrich product data.)

No fashion embedding: Nothing maps the query as a whole to products that match its overall intent, so whatever the text and image tagging layers can't handle well stays unsolved.

What Does This Mean for Your Team's Workload?

The YesPlz AI fashion search engine shifts the nature of your work. Right now, a significant portion of your week goes to maintenance: zero-result reports, synonym management, and manual product tagging. With a three-layer hybrid search system in place, that maintenance burden drops considerably. Two things take YesPlz's approach even further.

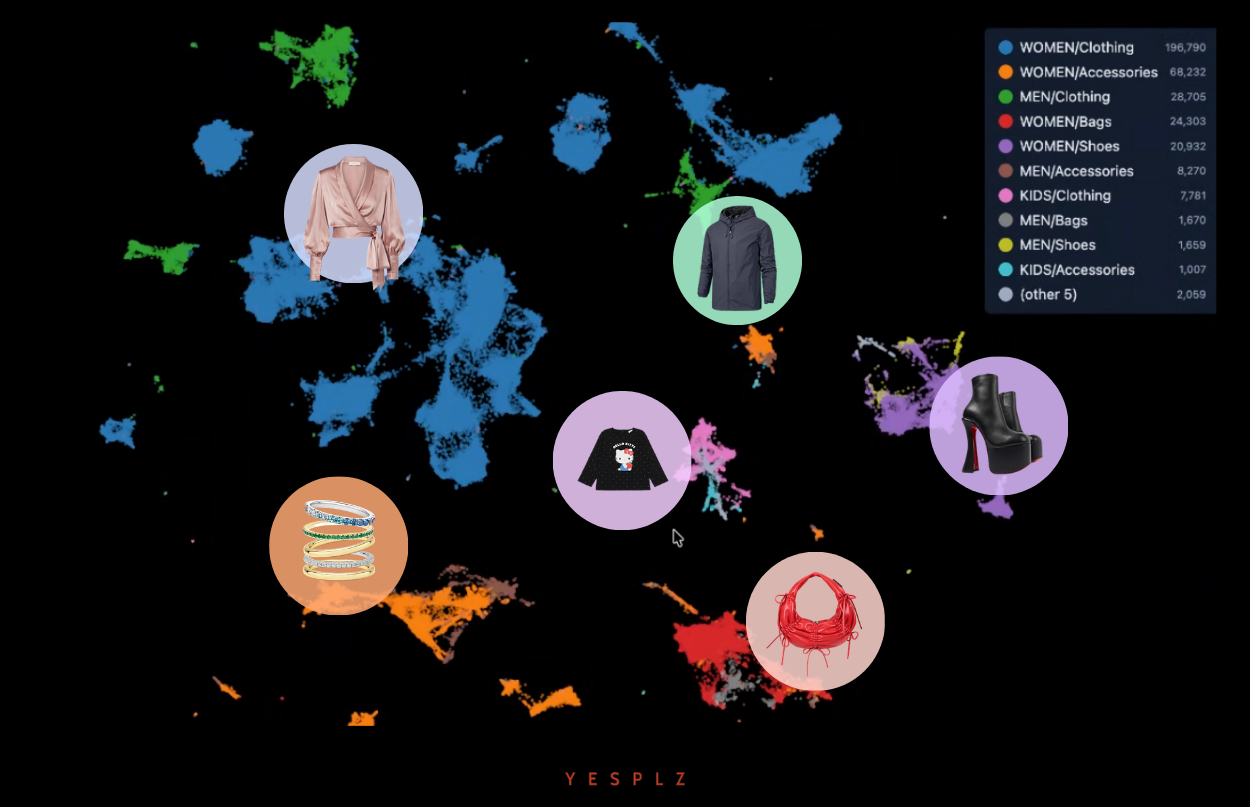

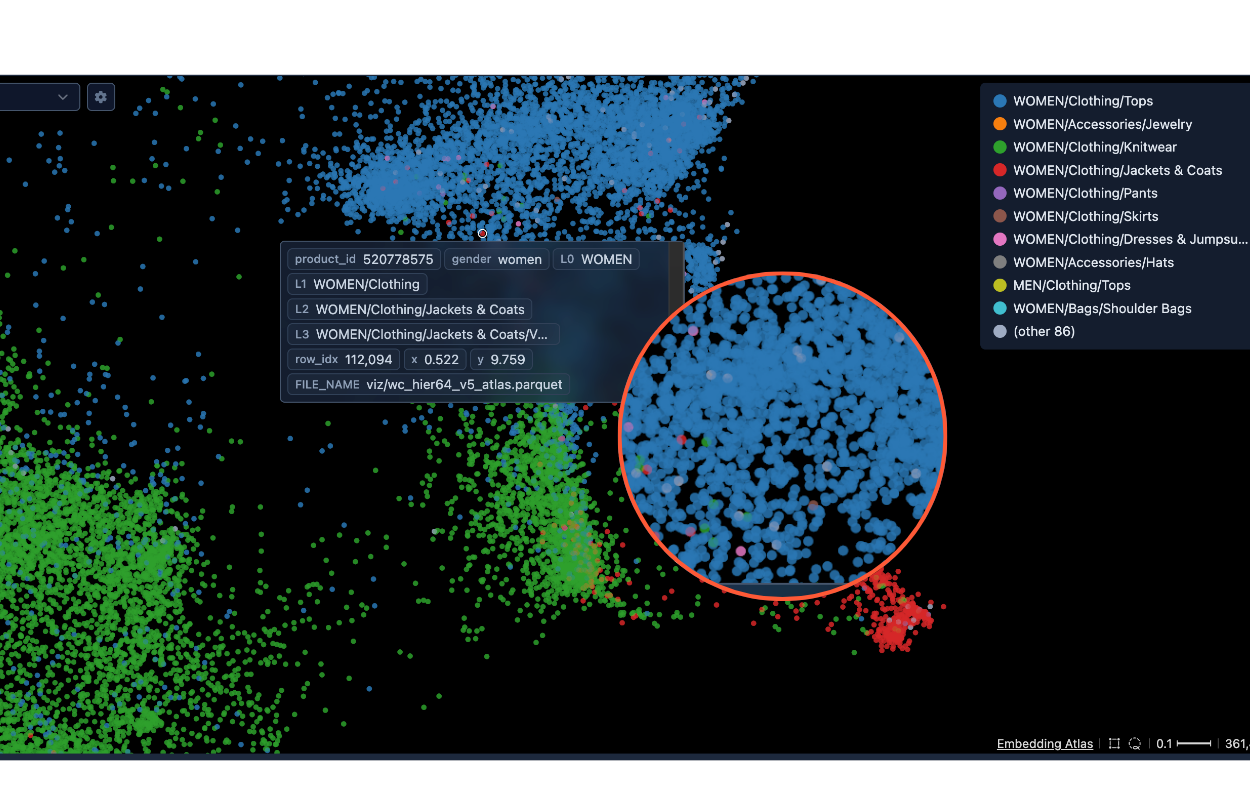

The image below shows a visualization of our fashion embedding training process. Each colored cluster represents a category, such as women's tops, knitwear, jackets and coats, or jewelry. The closer two dots are, the more similar those products are in the model's understanding of fashion.

The YesPlz team continuously scrutinizes and retrains the model to sharpen that understanding — not just at the broad category level, but down to granular fashion attributes. The result is a model that thinks the way a fashion buyer does, not the way a search index does.

Specifically, we train our own fashion embedding model on both text and image data. That means our model learns how fashion actually works:

How the same product gets described differently

How trends relate to each other

How aesthetics overlap

For your merchandising team, this means one less thing to manage. You don't need to account for every possible way a shopper might search for a product. The model handles that variation, so your team doesn't have to.

This is where the shift in your team's day-to-day workload becomes most tangible. Instead of your team watching the zero-result dashboard, the Search Tune Agent does it for you. It continuously monitors search queries, click-throughs, and conversion patterns across your site.

When the Search Tune Agent detects a new trending keyword, an emerging shopper phrase, or a gap between what shoppers are searching for and what your catalog surfaces, it acts automatically, updating keyword variations, synonym mappings, and intent connections without anyone on your team touching a thing.
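The article doesn't describe how the Search Tune Agent works internally. As a hypothetical illustration of just the detection step, here's a sketch that scans a query log for searches that repeatedly return zero results. The `(query, result_count)` log format and the `min_count` threshold are assumptions; a real agent would also weigh click-through and conversion signals and then update synonym mappings, which this sketch doesn't attempt.

```python
from collections import Counter


def find_search_gaps(log: list[tuple[str, int]], min_count: int = 3) -> list[str]:
    """Return queries that hit zero results at least `min_count` times, most frequent first."""
    # Normalize case and whitespace so variants of the same query are counted together.
    zero_hits = Counter(query.strip().lower() for query, result_count in log if result_count == 0)
    return [query for query, count in zero_hits.most_common() if count >= min_count]


# Hypothetical log of (query, number of results returned).
search_log = [
    ("old money sweater", 0),
    ("Old Money sweater", 0),
    ("old money sweater ", 0),
    ("black hoodie", 42),
    ("y2k top", 0),
    ("y2k top", 0),
]
print(find_search_gaps(search_log))  # ['old money sweater']
```

Lowering `min_count` to 2 would also flag ‘y2k top’; where to set that threshold is a tuning choice, not a fixed rule.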

What your team gets back is time for the work that actually requires human judgment — curation, merchandising strategy, deciding what gets featured, promoted, or pinned. The system handles retrieval and maintenance. Your team handles the decisions that no algorithm can make for you.

That's the shift worth paying attention to: from search maintenance to search strategy.

If you're weighing up hybrid search vendors, here are the questions that will tell you what you actually need to know.

1. Is your embedding model fashion-specific, or general-purpose?

A general-purpose model doesn't understand fashion vocabulary, trend relationships, or aesthetic overlaps. Ask vendors directly how their model was trained and on what data.

2. Does your system read product images, or only product text?

Image tagging is what makes search resilient to thin catalog data. If the system relies entirely on what's entered into your product fields, it's only as good as your suppliers' data entry.

3. How does your system handle new trending terms?

If the answer involves your team manually adding synonym rules or updating tags, the maintenance burden hasn't gone away. An autonomous system detects and maps new terms without human intervention.

4. How much ongoing maintenance does your team need to do?

If the answer involves weekly keyword reports, manual synonym management, or regular retagging cycles, the system is not truly autonomous, and your team's workload hasn't changed.

Want to see how hybrid search performs against your actual catalog — your products, your shoppers, your queries? Book a demo with YesPlz and see the three layers working together in real time.

Written by YesPlz.AI

We build next-gen visual search and recommendations for online fashion retailers.
